Across the EU, efforts to govern AI are advancing rapidly, but a more fundamental issue hasn’t yet been fully addressed. Digital identity is one of the foundational ordering principles of modern digital societies, determining who can act, under what authority, and how accountable they are within digital systems.

For decades, this architecture has been built around a simple premise: only humans and legally constituted organisations can participate in the digital environment.

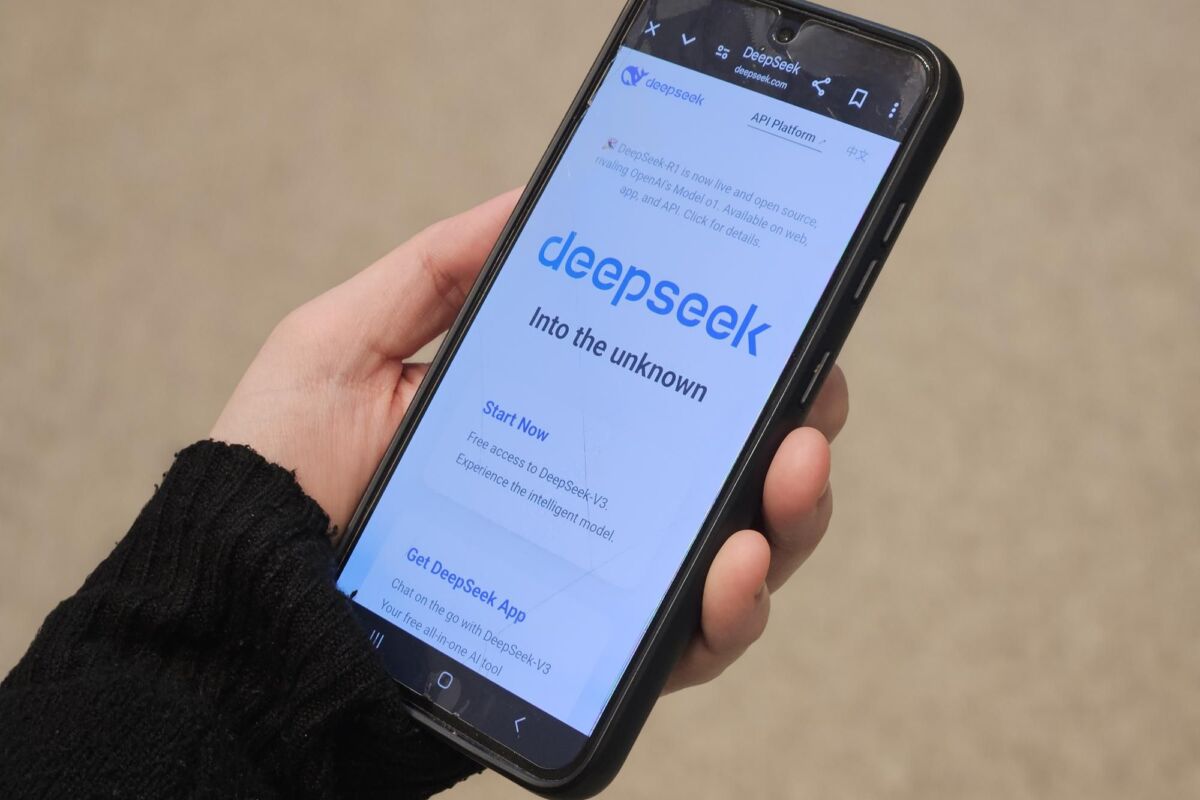

However, this premise is increasingly strained in a digital environment that’s no longer composed solely of human actors. That’s why the EU must begin building a dedicated digital identity infrastructure for AI agents.

An increasingly old-fashioned foundation

European digital governance frameworks have developed within this human-centred model, with each law addressing a specific layer of the ecosystem. The updated eIDAS framework (eIDAS 2.0) establishes what constitutes a ‘trusted’ digital identity, enabling secure authentication and legally recognised digital transactions.

Building on this, several other pieces of legislation assume that every individual will have a trusted digital identity and can be readily identified and authenticated when engaging in digital interactions. For example, platforms regulated under the Digital Services Act are expected to interoperate with the European Digital Identity Wallet for user authentication. Meanwhile, the AI Act introduces transparency obligations requiring certain AI-generated outputs to be disclosed or clearly identifiable, so that people can recognise such content.

Taken together, these frameworks regulate identities, platforms and AI tools, yet they remain centred on human participation and human interactions with systems and content.

But digital interactions are no longer exclusively human. AI agents are already acting on people’s behalf, exchanging data with humans (and with each other), negotiating services, producing information and increasingly interacting with physical infrastructure.

These developments are now challenging the legacy human-centred approach to digital identity. When an autonomous AI agent performs an action in the digital world, or even the physical one, how can that action be reliably attributed and verified? And who is ultimately accountable?

Without reliable mechanisms to record and verify agent activity, societies lack the necessary evidence to understand and govern the behaviour of autonomous systems. Policymakers can’t meaningfully assess risks, assign responsibility or design safeguards if autonomous agents’ actions remain opaque.

Effective governance needs infrastructure. While regulation defines permitted conduct, infrastructure determines whether actions can be attributed, interactions authenticated and decisions contested. Without an underlying layer that makes autonomous agents identifiable and traceable, no rules governing their behaviour can be reliably enforced. Current frameworks simply don’t address how agents should appear as accountable actors within digital interactions, and this gap needs to be closed.

We need a new framework

The challenge becomes even more pressing in light of the European Commission’s new Apply AI Strategy, which aims to accelerate AI’s deployment and adoption across Europe’s economy and public sector. As various initiatives encourage the development of increasingly autonomous systems, the number of interactions between software agents, services and physical infrastructure will only grow. Ensuring that these interactions remain attributable and verifiable is essential for maintaining trust in increasingly automated environments.

In the longer term, the inability to reliably attribute and verify autonomous agents’ actions in digital environments will also directly affect democratic cohesion and content moderation. Without ways to establish origin and authority, coordinated manipulation and even interference become difficult to detect and contest, and individuals may be unable to verify the information presented to them or to challenge automated decisions.

That’s why we need new layers of digital trust infrastructure capable of binding actions to identifiable actors and linking events to verifiable context. This means cryptographically anchored identities for AI agents, secure protocols governing interactions between humans and agents, and mechanisms capable of certifying real-world context.

Without these mechanisms, the agentic internet (an emerging part of the internet where AI agents act on users’ behalf) could evolve into an environment where impersonation, unverifiable automation and synthetic evidence become structurally indistinguishable from legitimate actions.

Europe must design and deploy a digital identity infrastructure through which AI agents can appear and operate within digital interactions as both identifiable and verifiable actors.

Such infrastructure is essential for ensuring accountability, contestability and information integrity. If Europe succeeds, it will not only govern AI systems but also help shape the trust infrastructure on which future autonomous digital societies will depend.